I have, for some years now, run an HP Gen8 Microserver as a home server. It has been perfectly fine; however, seven years on, its storage capacity is dwindling and its CPU is struggling to keep up with Plex transcoding. So I finally decided it was time to upgrade to something a bit meatier. And meaty is certainly what I ended up with…

The Base Server

The HP ML350 is a tower server. This suits me well as I have neither the space nor the desire for a full-sized rack. That said, it’s not small: it measures 45cm high, 22cm wide and a whopping 77cm long (including the front bezel).

It’s not a bad server for home use. You can pick them up pretty cheaply on eBay (around £200), the power consumption is OK and they’re not too noisy (see the Power/Cooling section below for more info).

The ML350 can be heavily customised when bought new, so the spec you end up with is very much down to the luck of the eBay gods. Here’s what to look out for:

CPU: The ML350 supports up to two E5-2600 series v3 or v4 CPUs. Most eBay listings I have seen have a single low-end E5-2609 v3 or v4. An E5-2609 v3 benchmarks at 4444 on PassMark. You can upgrade this all the way up to a pair of 22-core E5-2699 v4s, which benchmark at a whopping 24834 each: together, roughly 11x the compute capacity of a single 2609 v3.
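To sanity-check that 11x figure, here’s a quick Python sketch using the two PassMark scores quoted above. It assumes a dual-socket system scores the simple sum of its two per-CPU scores, which is optimistic; real-world scaling across two sockets is usually somewhat lower.

```python
# PassMark CPU Mark scores quoted in the post (one CPU each).
PASSMARK = {
    "E5-2609 v3": 4444,
    "E5-2699 v4": 24834,
}


def relative_capacity(cpu: str, count: int, baseline: str = "E5-2609 v3") -> float:
    """Ratio of `count` x `cpu` to a single `baseline` CPU.

    Assumption: multi-socket performance is the plain sum of the
    per-CPU scores, which overstates real-world scaling somewhat.
    """
    if count < 1:
        raise ValueError("count must be at least 1")
    return PASSMARK[cpu] * count / PASSMARK[baseline]


if __name__ == "__main__":
    ratio = relative_capacity("E5-2699 v4", 2)
    print(f"2x E5-2699 v4 vs 1x E5-2609 v3: {ratio:.1f}x")  # 11.2x
```

That works out at 49668 / 4444, or about 11.2x, hence the "roughly 11x" above.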

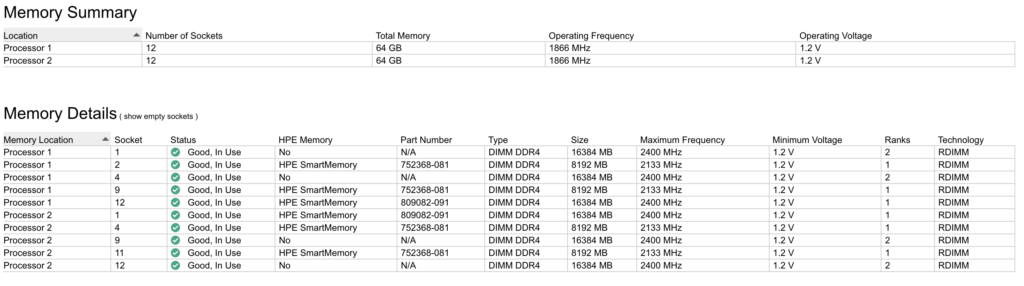

RAM: The server has 12 RAM slots per CPU, so a single-CPU configuration takes up to 12 DIMMs and a dual-CPU configuration up to 24. It takes 2133MHz or 2400MHz memory. You’ll probably get 16-64GB in an ex-corporate server from eBay.

Network: The ML350 has 4x 1Gbit copper network ports. It also has a separate port for iLO management.

Remote Management: The ML350 comes with a basic iLO 4 license. Advanced is a license upgrade (see below).

Disk Controller: The server could have shipped with a variety of disk controller options. You will find some with a Smart Array P440ar RAID controller, others with an H240 HBA (not RAID) and others with only the onboard fake-RAID (“software” RAID) controller. The controller might be a daughterboard on the motherboard or a PCI-Express card, and you can also have multiple controllers installed. Disk arrangements on the ML350 can be complex; see the “Disk Configuration” section below for more info.

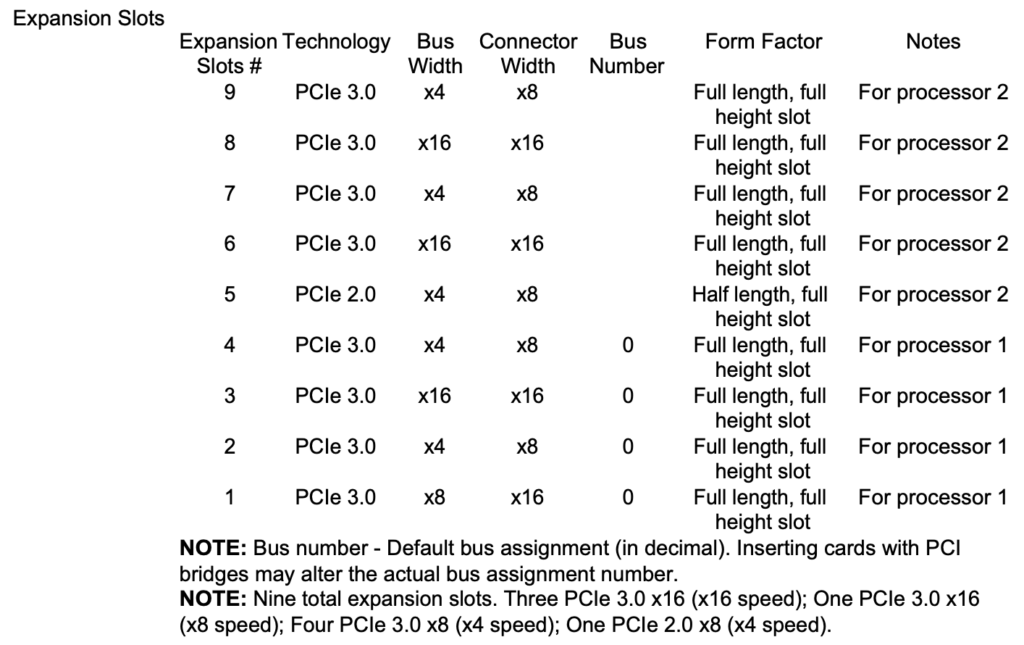

Expansion Cards: The ML350 has nine PCI-E slots. With a single CPU, you get two x4 slots, one x8 slot and one x16 slot. With two CPUs, you also get another three x4 slots and two x16 slots. Note that slot 5 (an x4 slot) is PCI-E 2.0, whereas the others are all PCI-E 3.0. There are no legacy PCI card slots. Here’s the table of slots from the manual:

PSU: The ML350 supports up to four PSUs. You’ll normally find servers shipping with a single 500W PSU on a power backplane that supports up to two PSUs.

Fans: The removable fan bank will usually have three fans, in a non-redundant configuration, if you have a single CPU. See the Power/Cooling section below if you want an additional CPU and/or redundant fans.

Maxing your Spec

If you have money to burn, I have another post on how to max out the spec of the ML350 Gen9.

Device Firmware

If you have bought an old server, it’s likely that the previous owner hasn’t updated the firmware in a long time. It’s well worth doing an upgrade before you start.

HP provide Service Packs for ProLiant (SPPs); however, at the time of writing, you need an HP support subscription to download them. For such an old server, this is a bit silly: one might think HP would want to support recycling and reuse. Here’s the official service pack for the Gen9: https://support.hpe.com/hpesc/public/swd/detail?swItemId=MTX_ab56dbf228be4a80adfb576b2a

I have hosted a copy of SPP-Gen9.1.2022_0822.4.iso if you need it. I promise it’s legit, but do please check its SHA256 checksum against the one published on the HP website before you use it!
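If you’d rather not take anyone’s word for it (mine included), here’s a minimal Python sketch for checking the ISO. The expected value is the SHA256 checksum quoted in the comments below; compare it against the figure on HP’s own download page rather than trusting this copy. On a Mac, shasum -a 256 does the same job.

```python
import hashlib
import sys

# Checksum quoted in the comments below; confirm it against HP's website.
EXPECTED_SHA256 = "cbd7a2a1d1aa4bbad95797dd281acf89910f9054d36d78857ee3e8c5fe625790"


def sha256_of(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash the file in 1 MiB chunks so a multi-GB ISO never sits in RAM."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches(path: str, expected: str = EXPECTED_SHA256) -> bool:
    """True if the file's SHA256 equals `expected` (case-insensitive)."""
    return sha256_of(path) == expected.strip().lower()


if __name__ == "__main__" and len(sys.argv) > 1:
    iso = sys.argv[1]  # e.g. SPP-Gen9.1.2022_0822.4.iso
    print("OK" if matches(iso) else "MISMATCH: do not use this ISO")
```

Run it as python check_iso.py SPP-Gen9.1.2022_0822.4.iso (the script name is just an example). Reading in chunks keeps memory use flat regardless of the ISO's size.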

You don’t seem to be able to write the ISO to USB using balenaEtcher or similar, and I’m not sure why: it boots but then throws an error about a missing partition. Inside the ISO you will find \usb\usbkey\usbkey.exe, which can be used on Windows to write the ISO to USB. This worked fine for me.

Disk Configuration

The ML350 supports either Small Form Factor (SFF) 2.5″ disks or Large Form Factor (LFF) 3.5″ disks. The LFF machines seem to be rarer. I ended up buying one of each: I found both at bargain prices, and it was cheaper to buy two servers and combine their components than to buy one plus the extra components.

In an SFF server, you can run up to six banks of eight 2.5″ drives (48 drives total). In an LFF server, you can run up to three banks of eight 3.5″ drives (24 drives total). You can install 2.5″ drives in LFF (3.5″) bays with an appropriate adapter; just Google “hp gen9 2.5″ adapter”. Obviously, you can’t install 3.5″ drives in SFF (2.5″) bays.

Apart from the front drive bay metalwork, the SFF and LFF versions of the ML350 chassis are broadly identical. You must buy the correct chassis for your requirements: you cannot easily convert an SFF chassis to LFF, or vice versa, by just swapping some parts, because the metalwork is riveted together. You also cannot mix SFF and LFF bays, as the metalwork is very different. See photos below for clarity.

RAID Controller / HBA / SAS Expander

The disks connect to the system using internal mini-SAS cables. Each mini-SAS cable supports up to four disks, so each bank of eight drives on both the SFF and LFF chassis has two mini-SAS cables.

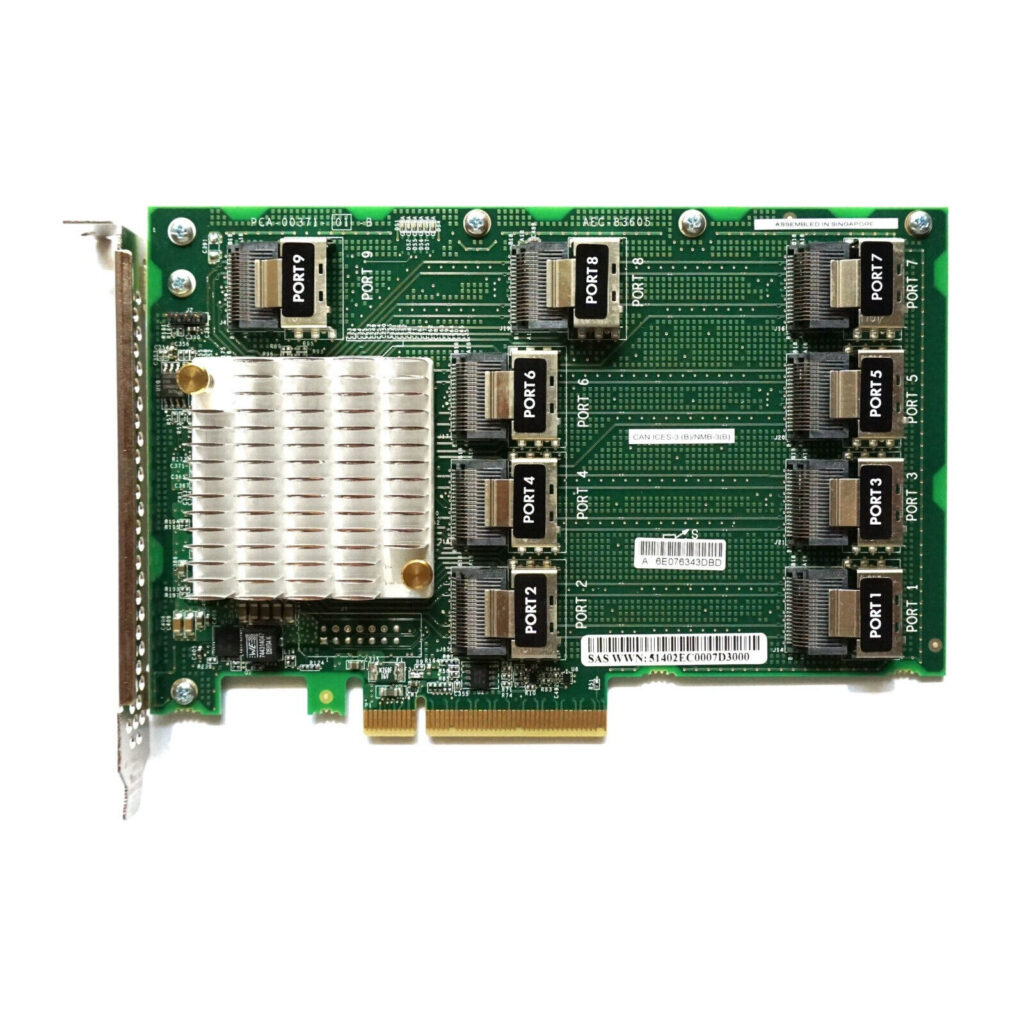

The motherboard’s own “fake RAID” controller has two mini-SAS ports, as do the P440ar daughterboard and the H240 PCI-Express HBA. As such, to connect more than eight drives you will need either multiple controllers, if you don’t need a single RAID array for all the drives, or a 12Gbit SAS expander card if you do want a single RAID array. A picture of the SAS expander card is below. It has HPE part numbers 761879-001 and 727250-B21. The SAS expander cannot be connected to H240ar or P440ar controllers (daughterboard-type controllers); your controller must be an HP PCI-E card.

If you want three drive bays (banks of eight drives), you will need a single SAS expander and either an H440 PCI-E RAID controller (HP part numbers 726823-001, 749797-001) or an H240 PCI-E HBA (HP part numbers 779134-001, 761873-B21, 726907-B21).

If you want more than three drive bays (in an SFF chassis), you will need two SAS expander cards and either two H440 or H240 controllers, or a single P840 RAID controller (HP part number 761880-001) if you need a single RAID array for all drives.
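To make those rules of thumb concrete, here’s a rough Python sketch that works out, for a given number of drive bays, the drive count, the number of mini-SAS cables, and either how many two-port controllers you’d need without an expander (separate arrays) or how many SAS expanders you’d need for a single array. It only encodes the rules described above (eight drives per bay, four drives per mini-SAS cable, two ports per controller). It doesn’t pick controller models, treats two bays like three (one expander) as an assumption, and is not an HPE configuration tool, so double-check against the user manual before buying parts.

```python
import math

DRIVES_PER_BAY = 8          # each drive bay (bank) holds eight drives
DRIVES_PER_MINI_SAS = 4     # each internal mini-SAS cable serves four drives
PORTS_PER_CONTROLLER = 2    # onboard, P440ar and H240 each have two mini-SAS ports


def plan_storage(bays: int, sff: bool = True) -> dict:
    """Rough cabling plan for `bays` drive bays, per the rules of thumb above.

    Not an HPE configuration tool: check the user manual before buying.
    """
    max_bays = 6 if sff else 3  # SFF takes up to six bays, LFF three
    if not 1 <= bays <= max_bays:
        raise ValueError(f"expected 1 to {max_bays} bays for this chassis")
    drives = bays * DRIVES_PER_BAY
    cables = math.ceil(drives / DRIVES_PER_MINI_SAS)
    # Option A: no expander; one two-port controller per two cables,
    # giving a separate array per controller.
    direct_controllers = math.ceil(cables / PORTS_PER_CONTROLLER)
    # Option B: one array across everything via SAS expander(s):
    # none for one bay, one for two or three bays (two bays assumed
    # to be handled like three), two beyond that.
    if bays == 1:
        expanders = 0
    elif bays <= 3:
        expanders = 1
    else:
        expanders = 2
    return {
        "drives": drives,
        "mini_sas_cables": cables,
        "direct_controllers": direct_controllers,
        "expanders_for_single_array": expanders,
    }


if __name__ == "__main__":
    for bays in (1, 3, 6):
        print(bays, plan_storage(bays))
```

A fully populated SFF chassis comes out at 48 drives on 12 mini-SAS cables, which is why the two-expander route matters if you want a single array.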

RAID Battery

The motherboard has a connector for an HPE Smart Storage Battery. This is a fairly good system, in that all the HPE RAID controllers in the server can share the same battery. That said, these batteries seem horribly unreliable and don’t last long, so you should expect the server you buy to need a new one. iLO will make this apparent. You can get unofficial Chinese knock-offs on eBay for about £30.

When changing this battery, ensure the server is completely unplugged. You may need a couple of reboots before the server detects the new battery properly and stops alerting.

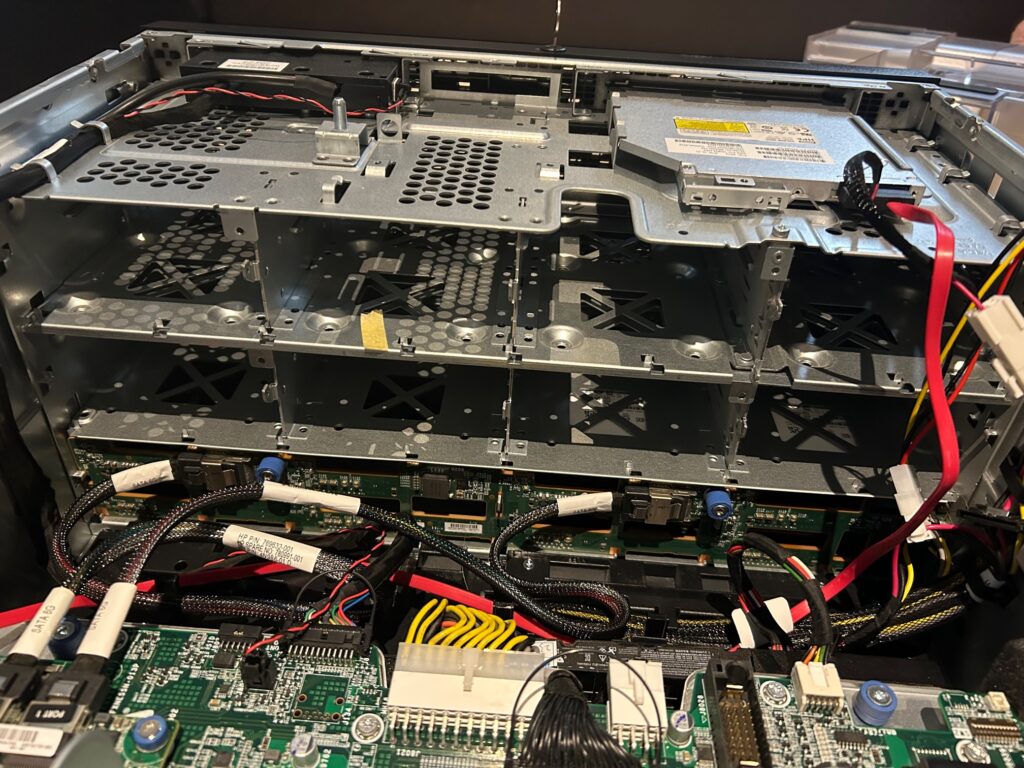

Adding Drives to SFF Chassis

To add more drives to the SFF chassis, you will need metal drive cages with backplanes. The case does not have the ‘slots’ for the drives to go into. You cannot just buy a backplane.

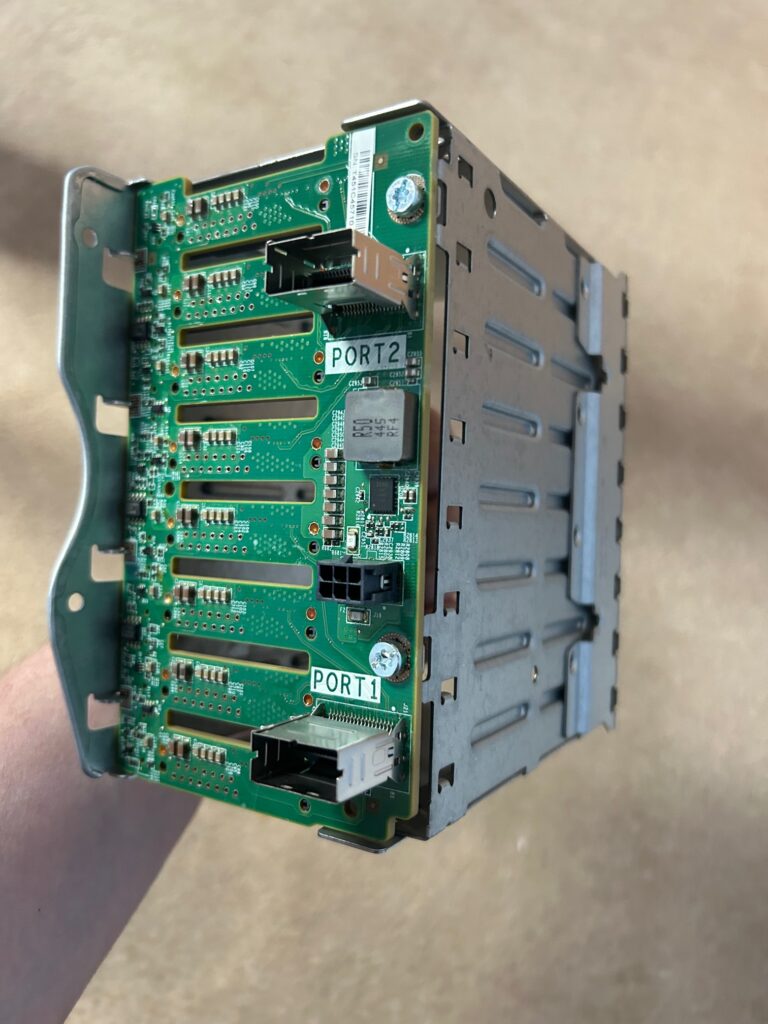

The cage and backplane look like this:

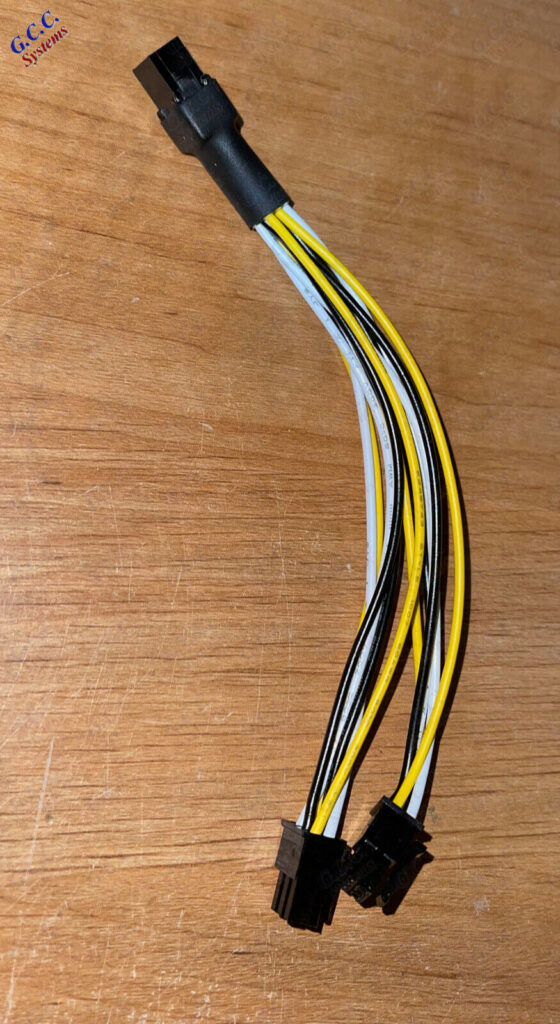

Each bank of eight drives needs its own power connector, and both the LFF and SFF chassis come with only three 6-pin power connectors. This is enough for the LFF chassis, but the SFF chassis needs another three connectors to be fully populated. You can buy unofficial franken-cables on eBay (search for “HP ML350 Gen9 G9 SFF Power Splitter”). These should work fine as long as your PSU is powerful enough to run all the disks. They look a bit like this:

Alternatively, the official way to do this is to upgrade the power backplane to one which supports four PSUs (HP part number 802988-B21). This has an extra 20-pin connector from which you can run the extra three drive bays. You can’t just install two 2-PSU backplanes instead; they don’t fit.

Adding Drives to an LFF Chassis

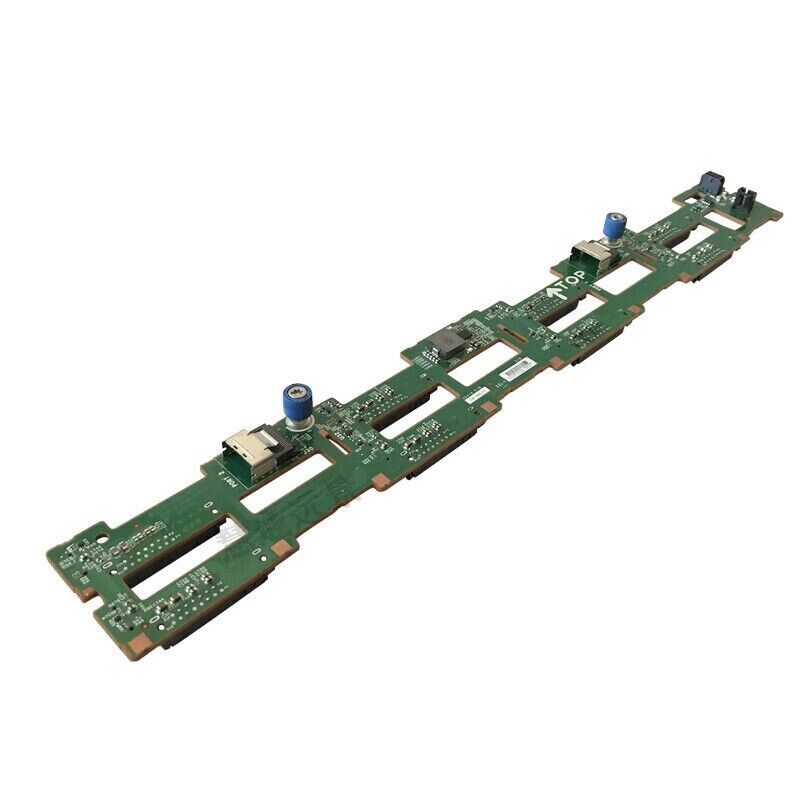

To add drives to the LFF chassis, you just need more backplanes; no additional metalwork is required. The part number seems to be 779083-001. They just screw in with thumbscrews. Picture below:

Doing the Work

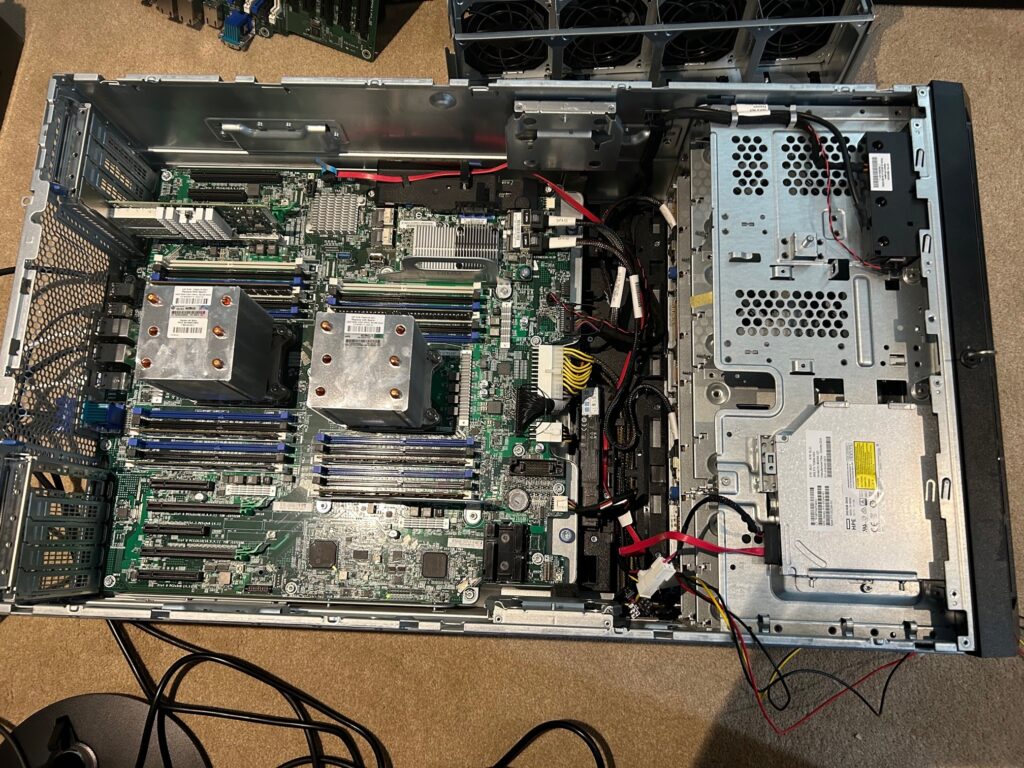

The ML350 servers, like most HPs, are really easy to work on. Once you remove the fans, which is done simply by lifting a lever and removing the whole fan tray, you’ll have access to everything. You can even remove the whole motherboard by undoing two thumbscrews and sliding it backwards, which gives you access to the power backplane.

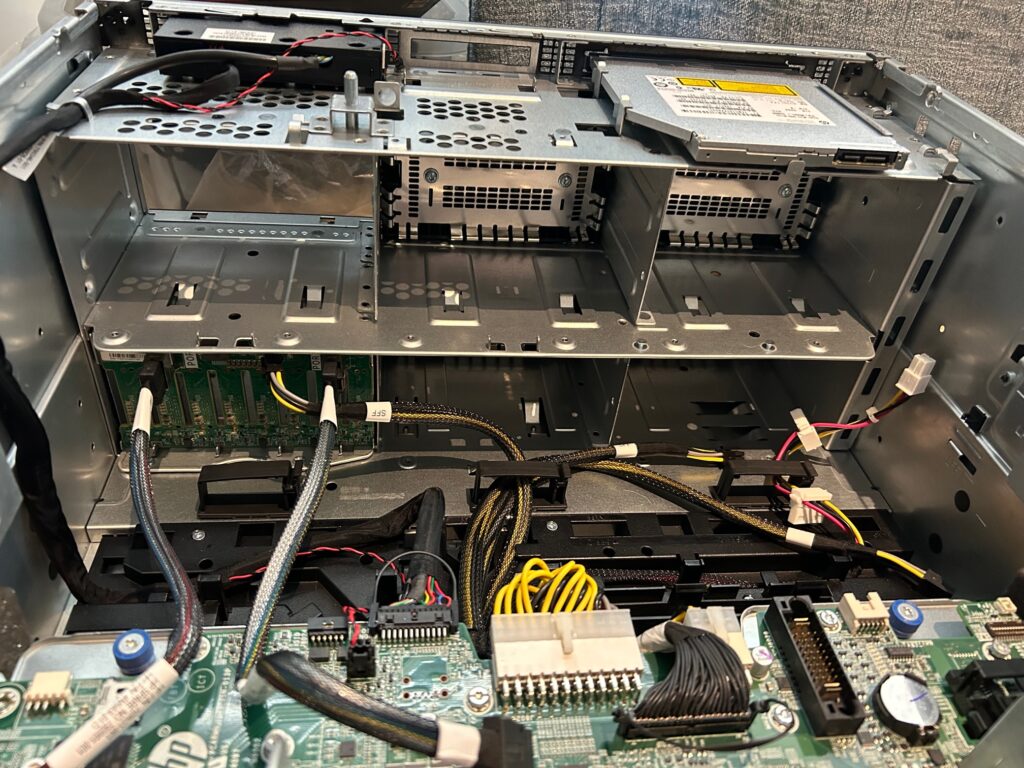

Here’s a photo of the inside with the fan tray and cooling baffle removed:

CPU Upgrade

As mentioned above, the ML350 supports up to two E5-2600 series v3 or v4 CPUs. You can upgrade to any CPU in this range, up to a pair of E5-2699 v4s, for phenomenal performance. The sweet spot seems to be a pair of E5-2690 v4s, which you can get for about £35 each; anything above that seems rare and expensive. If you want two CPUs, you’ll need to buy an additional heatsink (HP part number 769018-001) and another fan (HP part numbers 768954-001 and 780976-001). You’ll also need to either add more RAM or distribute your DIMMs across both CPUs.

RAM Upgrade

As mentioned above, the server has 12 RAM slots per CPU. You can run 2133MHz or 2400MHz RAM. I ended up with a mix of both after consolidating the RAM from the 2 servers I bought, with a total of 128GB.

Be sure to check the “General DIMM slot population guidelines” in the ML350 Gen9 user manual to confirm your configuration is supported.

Each CPU has four memory channels. You should fill the slots in order (A, B, C, D, etc.) as marked on the board. Pay close attention, as the slots are not placed sequentially. If you’re mixing quad-, dual- and single-rank DIMMs, install the quad-rank DIMMs first, then dual-rank, then single-rank. Here’s my memory config. Note that some of it isn’t official-looking HP RAM, but it has broadly the same model numbers and works fine:

HP is picky about its RAM, so you should try to use official-looking HP RAM. Micron modules with model numbers starting with MTA18ASF1G72PZ should be OK. That said, as you can see from my config above, non-HP RAM works fine too.
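The ordering rule above (fill slots in letter order, higher-rank DIMMs first) can be sketched in Python for a single CPU. This is a hypothetical helper, not an HP tool: it assumes the 12 slots per CPU are lettered A to L in population order, and it says nothing about where those letters physically sit on the board or whether the resulting mix is supported, so still check the “General DIMM slot population guidelines” in the manual.

```python
from dataclasses import dataclass
from typing import Dict, List

# Assumption: 12 slots per CPU, lettered A-L in population order.
SLOT_ORDER = "ABCDEFGHIJKL"
VALID_RANKS = (1, 2, 4)  # single, dual and quad rank


@dataclass
class Dimm:
    label: str
    ranks: int


def assign_slots(dimms: List[Dimm]) -> Dict[str, str]:
    """Map slot letters to DIMM labels for ONE CPU, highest rank first.

    sorted() is stable (even with reverse=True), so DIMMs of equal
    rank keep the order they were given in.
    """
    if len(dimms) > len(SLOT_ORDER):
        raise ValueError("at most 12 DIMMs per CPU")
    for d in dimms:
        if d.ranks not in VALID_RANKS:
            raise ValueError(f"{d.label}: rank must be 1, 2 or 4")
    ordered = sorted(dimms, key=lambda d: d.ranks, reverse=True)
    return {slot: d.label for slot, d in zip(SLOT_ORDER, ordered)}


if __name__ == "__main__":
    sticks = [Dimm("16GB 1R", 1), Dimm("32GB 2R", 2), Dimm("64GB 4R", 4), Dimm("32GB 2R #2", 2)]
    print(assign_slots(sticks))
```

With that example, the quad-rank stick lands in A, the two dual-rank sticks in B and C, and the single-rank stick in D. For a dual-CPU setup, split the DIMMs between the CPUs first and run each list separately.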

Disks

Having an LFF chassis, I installed 2x Samsung 850 EVO 1TB SATA SSDs (using a 3.5″-to-2.5″ adapter) in RAID 1 for the OS and 4x 14TB SAS drives in RAID 10 for the data. The key point here is that you can mix SAS and SATA drives. I put them on separate ports of the RAID controller, but I’m not sure whether this matters.
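For anyone planning a similar layout, here’s a small Python sketch of the usable capacity of those two arrays. It only covers the two levels I used (a two-disk RAID 1 mirror and RAID 10 over an even number of disks) and ignores controller metadata and filesystem overhead. Drive makers quote decimal terabytes, so it also converts to the binary TiB figure your OS is likely to show.

```python
TB = 10**12   # drive makers' decimal terabyte
TIB = 2**40   # binary tebibyte, as most OS tools report it


def usable_tb(level: str, drives: int, drive_tb: float) -> float:
    """Usable capacity in decimal TB for the two RAID levels used here."""
    if level == "raid1":
        if drives != 2:
            raise ValueError("this sketch treats RAID 1 as a two-disk mirror")
        return drive_tb  # both disks hold the same data
    if level == "raid10":
        if drives < 4 or drives % 2:
            raise ValueError("RAID 10 needs an even number of disks, at least 4")
        return drives // 2 * drive_tb  # striped across mirrored pairs
    raise ValueError(f"unsupported level: {level}")


def tb_to_tib(tb: float) -> float:
    """Convert decimal TB to binary TiB."""
    return tb * TB / TIB


if __name__ == "__main__":
    os_tb = usable_tb("raid1", 2, 1)
    data_tb = usable_tb("raid10", 4, 14)
    print(f"OS: {os_tb} TB, data: {data_tb} TB (~{tb_to_tib(data_tb):.1f} TiB)")
```

So the 4x 14TB RAID 10 array gives 28TB usable, which your OS will report as roughly 25.5TiB; half the raw capacity goes to mirroring.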

Power/Cooling

As mentioned above, the ML350 Gen9 can have up to four power supplies. You will normally find them with a single 500W power supply and a power backplane which supports up to two PSUs. You can replace the backplane with one which supports four PSUs (HP part number 802988-B21). This gives you an extra 20-pin connector so that you can power all six drive bays. It also gives you 4x GPU power connectors. You can’t just install two 2-PSU backplanes instead; they don’t fit.

Here’s a picture of the standard 2-PSU backplane:

You may need to upgrade the PSUs if you’re going to run some beefy GPUs or a bunch of disks; you can buy 800W and 1000W PSUs. Mine draws 105W when idle (Proxmox booted with no VMs running), 194W with four cores at full load and 385W with all cores at full load. This was with a pair of E5-2690 v4s, 2x SSDs and 4x 7200 RPM SAS drives.
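To put those figures in context, here’s a quick Python sketch of each measured draw as a percentage of the 500W, 800W and 1000W PSU options. Two caveats: my numbers were measured at the wall, so they include PSU losses and slightly overstate the DC load (which makes this conservative); and in a redundant pair, one PSU must be able to carry the whole load on its own if the other fails.

```python
# Wall-socket measurements from the post (pair of E5-2690 v4, 2x SSD, 4x SAS).
MEASURED_W = {
    "idle (Proxmox, no VMs)": 105,
    "4 cores at full load": 194,
    "all cores at full load": 385,
}
PSU_OPTIONS_W = (500, 800, 1000)


def load_percent(draw_w: float, psu_w: float) -> float:
    """Draw as a percentage of a single PSU's rating.

    For a redundant pair, use one PSU's rating: it must carry
    everything if its partner fails.
    """
    if psu_w <= 0:
        raise ValueError("PSU rating must be positive")
    return 100 * draw_w / psu_w


if __name__ == "__main__":
    for state, watts in MEASURED_W.items():
        cells = ", ".join(f"{psu}W: {load_percent(watts, psu):.0f}%" for psu in PSU_OPTIONS_W)
        print(f"{state:<24} {cells}")
```

Flat out, my setup sits at 77% of a single stock 500W PSU, so adding GPUs or more disks on top of that is where the 800W or 1000W units start to make sense.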

The ML350 needs three fans for a single CPU and four fans for dual CPUs. It can take up to eight fans, with four of them stacked directly behind the primary fans. This gives you a redundant fan configuration, which allows the server to continue operating when a fan breaks. Fans are HP part numbers 768954-001 and 780976-001.

As standard, the fans run extremely quietly. Surprisingly so, in fact. That said, after I installed 2x E5-2690 v4s and ran them at full load, they ran very hot: the rearmost CPU (CPU 0) hit 95°C, which is alarmingly hot. I changed the fan setting in the BIOS to “Increased Cooling”. The fans are slightly noisier, but the CPU sits at around 50°C when running at full load. To do this, go to System Configuration > BIOS/Platform Configuration (RBSU) > Advanced Options > Fan and Thermal Options > Thermal Configuration, press Enter and select Increased Cooling.

iLO 4 Advanced

The ML350 comes with the basic iLO by default. It doesn’t do full remote management (remote console, remote media, etc.). If you look hard enough (or not very hard at all), you might be able to find a way to upgrade it to iLO 4 Advanced, which supports full remote administration.

Network

The ML350 has 4x onboard 1Gbit copper network interfaces, and the abundance of PCI-Express slots makes it easy to add a 10Gbit card. I picked up an Intel X520-DA2 on eBay for £35, which gives two SFP+ ports.

Software

As on the Microserver, I’m running Proxmox for virtualisation. It seems to work fine on this hardware.

Hi Phil,

Thanks for writing this blog; it was very helpful.

I bit the bullet and went for a pair of 2699A “Amazon spec” CPUs and a 6x NVMe enablement kit.

The CPUs arrived yesterday, and I’m now going over the HPE documentation:

Customer Advisory (c04849981)

https://support.hpe.com/hpesc/public/docDisplay?docId=c04849981

Which in short says:

1) Ensure the server to be upgraded has the Intel Xeon E5-2600 v3 processors installed.

2) Ensure that the current System ROM is prior to v2.5x.

Caution:

Failure to follow the proper flash setup can cause the flash process to stall and not complete.

For all systems with System ROM version 2.5x or higher, the ROM must first be down-flashed prior to upgrading the ME Firmware. The new ME firmware is required for support of the Intel Xeon E5-2600 v4.

The Intel Xeon E5-2600 v4 processor will not be installed until the end of the upgrade process.

I have just used a USB stick to upgrade with the SPP; I found your link to it after I had purchased one.

And now I have to down-flash my ROM to 2.42 to upgrade from the 2630 v3 to the 2699A v4.

My question is, did you actually go through this down-flashing when you upgraded to the v4 from v3?

If so, do you by any chance have access to the 2.42 version? Here’s a link to it:

https://support.hpe.com/connect/s/softwaredetails?softwareId=MTX_417a7a2d833840ed958017648a

I have a home office and bought the ML350 Gen9 for its quietness, and the 2699A because they have twice the working temperature, at 145°C. I have no air conditioning and am not allowed to install an AC unit either.

Regards, James

Glad it helped. I didn’t downgrade first but I don’t know what version I was on originally.

I don’t have access to HP support, sadly. The SPP linked here was obtained nefariously.

Did you ever get this figured out? I am having trouble with this as well.

We must have different views on OK power consumption for a home server!

105W running constantly is rather high.

2.5kWh a day: roughly £0.44 at current prices, so £14ish a month. Awful lot cheaper than renting an equally high-spec box in colo. Cheaper than the 4K Netflix package too 😉

That said, if you can get away with a Raspberry Pi or similar, an ML350 is definitely overkill!
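For anyone wanting to redo that sum with their own tariff, here’s a quick Python sketch. The £0.175/kWh price is only a back-calculation from the figures in the reply above (£0.44 for roughly 2.5kWh), not a quoted tariff; substitute your own rate.

```python
HOURS_PER_DAY = 24


def daily_kwh(watts: float) -> float:
    """Energy used in a day by a constant load, in kWh."""
    return watts * HOURS_PER_DAY / 1000


def running_cost(watts: float, price_per_kwh: float, days: int = 30) -> float:
    """Cost of running a constant load for `days` days."""
    return daily_kwh(watts) * price_per_kwh * days


if __name__ == "__main__":
    price = 0.175  # assumed £/kWh, implied by £0.44 for ~2.5kWh
    print(f"{daily_kwh(105):.2f} kWh/day")
    print(f"£{running_cost(105, price, days=1):.2f}/day, £{running_cost(105, price):.2f} per 30 days")
```

At 105W that comes out at about 2.52kWh and £0.44 a day, or roughly £13 over 30 days, in the same ballpark as the “£14ish” estimate.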

The ISO from the firmware link is corrupt when I try to mount it. Can you post a new link, please? My server spins up to 80% fan speed sporadically.

Looks alright to me. I just downloaded it and verified the sha256 checksum against the HP website:

VONMVPCVXYVFD:~ PLavin$ shasum -a 256 SPP-Gen9.1.2022_0822.4.iso
cbd7a2a1d1aa4bbad95797dd281acf89910f9054d36d78857ee3e8c5fe625790  SPP-Gen9.1.2022_0822.4.iso

Brilliant post. The office was clearing out a load of kit, so I’ve got power supplies (various), memory (16x 16GB sticks for v3), SFP+/Ethernet cards and fans. Now I just need the server itself. Watching eBay and waiting. I’ll be following this post for sure.