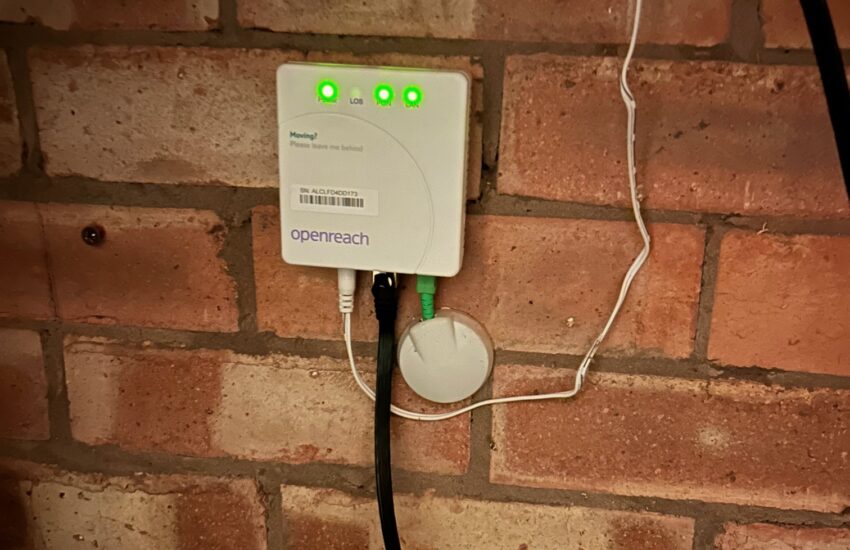

BT OpenReach have fairly recently launched a new FTTP service, which they are offering to ISPs who use the

The thoughts and ramblings of an Engineer

What’s the beef? It turns out that most basic WiFi repeaters (i.e. a unit that connects to one WiFi

This is actually a pretty decent access point, hardware-wise. It has 2 radios and reasonably high-gain external

Due to the high resale value of Defender spare wheels and spare wheel covers, they are very prone to

It seems the UniFi Controller is, at the time of writing, horribly outdated. It relies on MongoDB < 5.0.4.4, which

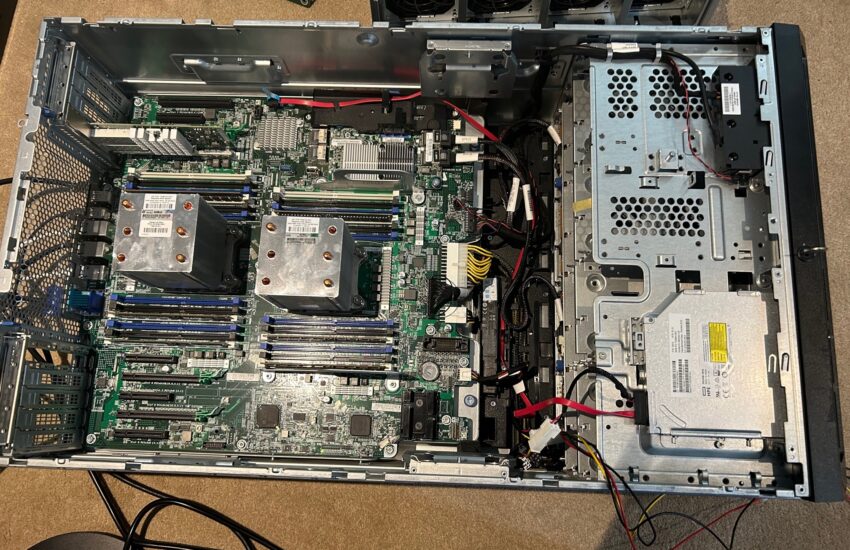

I recently did a post on the ML350 Gen9 as a home server. This server was incredibly configurable when

I have, for some years now, run an HP Gen8 Microserver as a home server. This has been perfectly fine

It feels like quite a common requirement to want to sanitise your Java application logs to remove passwords, PII,

When running php-fpm in a Docker container, it is often desirable to log errors to stderr such that they can

This error has suddenly started appearing on previously working Puppet implementations. Git introduced a change in December 2022 in
